This page is not an official page of the app or its developer, but an independent editorial publication created for informational and commentary purposes. Unless expressly stated otherwise, neither the app nor its developer is affiliated with, endorsed by, sponsored by, authorized by, or otherwise officially connected with MWM, Apple, Google Play, the app publisher, or the app's developer, and nothing on this page implies that the app was developed using MWM's services. Any trademarks, logos, screenshots, and other content remain the property of their respective owners.

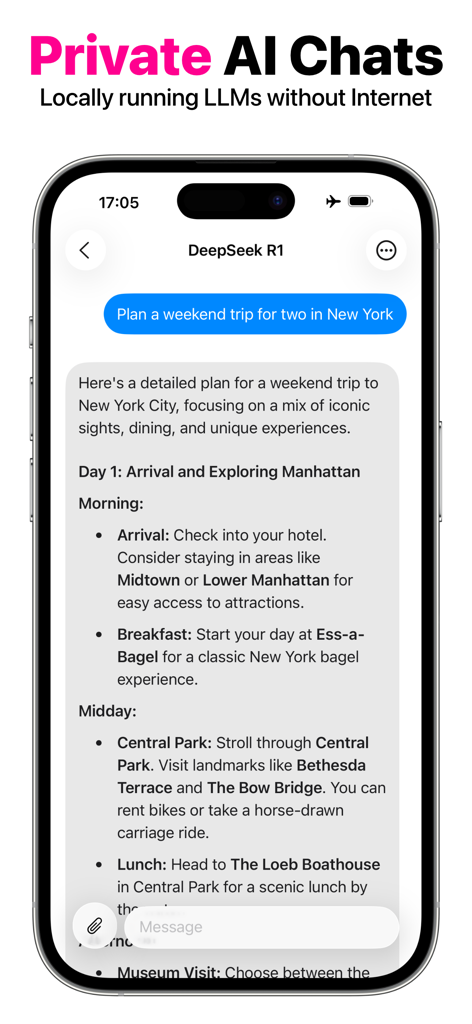

OfflineLLM: Private AI Chat

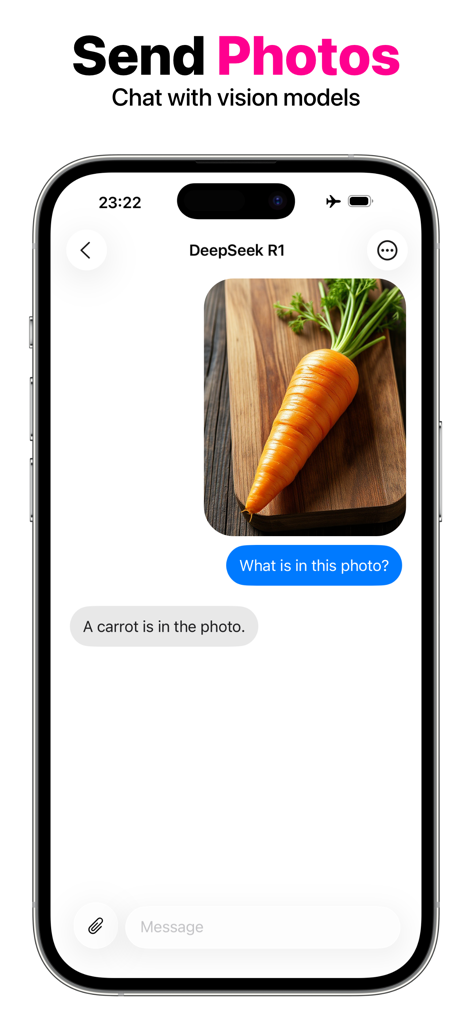

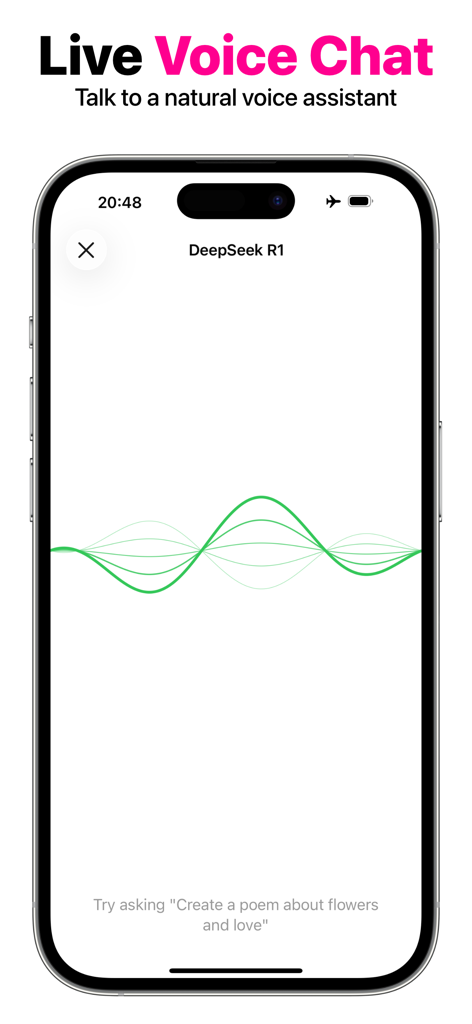

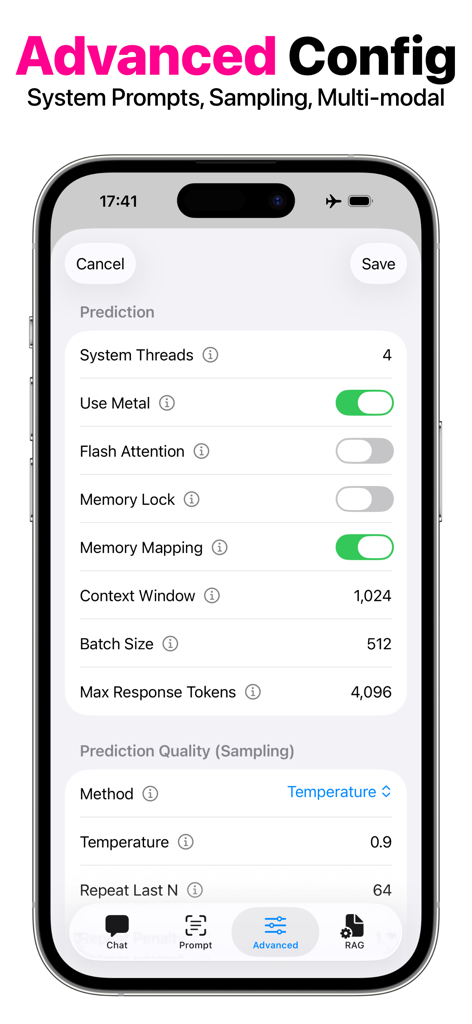

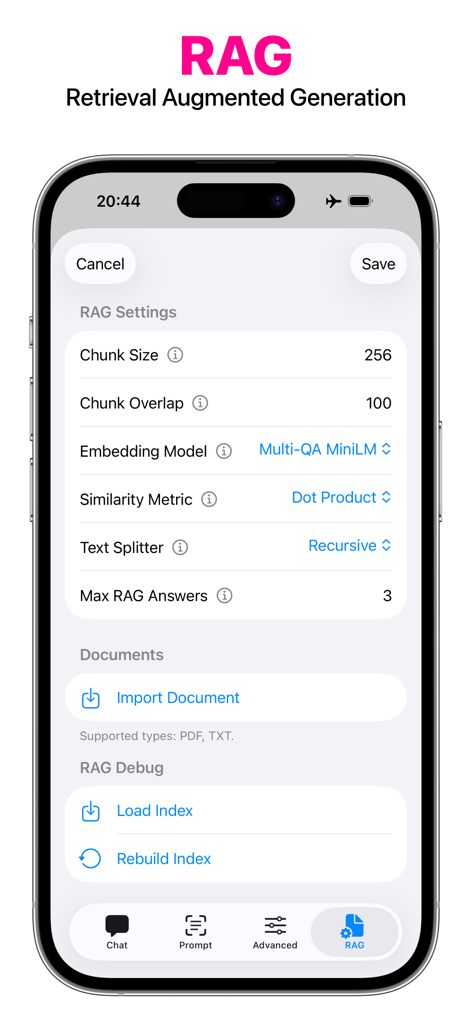

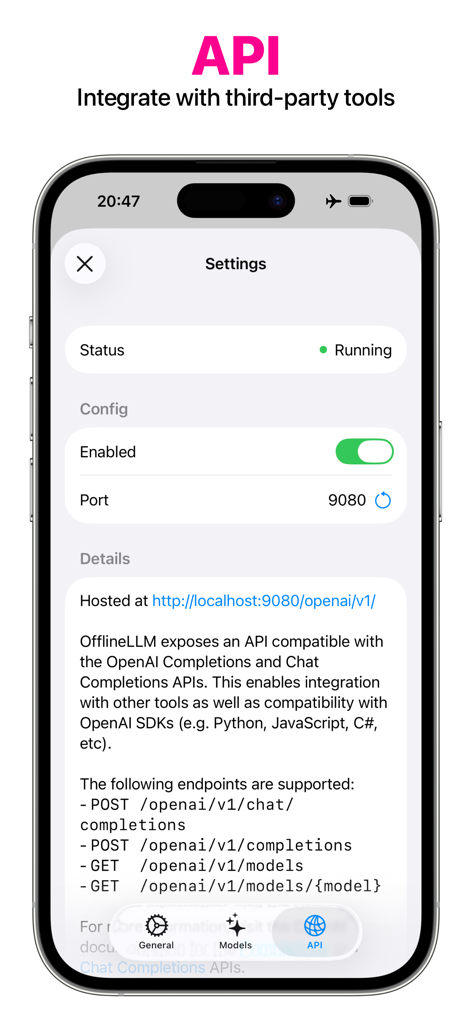

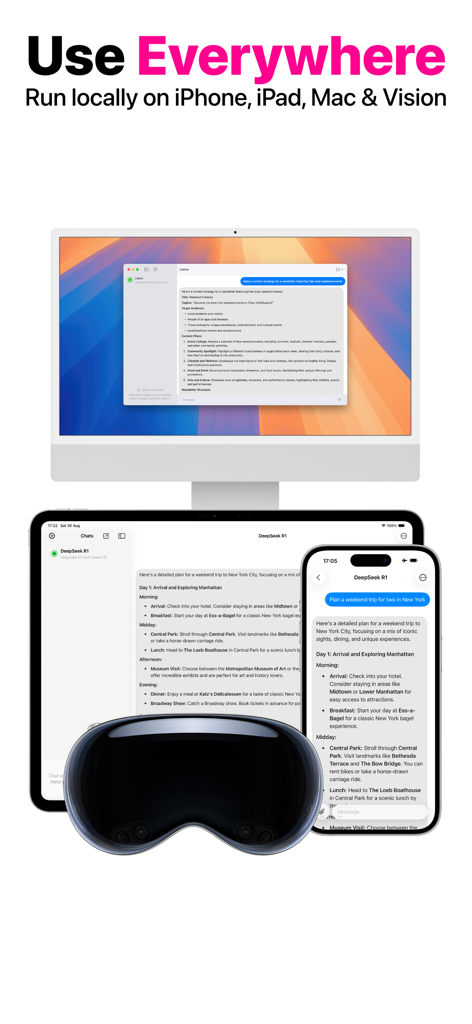

Secure your data sovereignty with the fastest offline AI engine. Harness Llama, DeepSeek, and Mistral locally on your iPhone, Mac, and Vision Pro with Metal 3 performance, RAG support, and zero cloud dependency.

Downloads: 5K+

User Rating: (not shown)

Total Ratings: 0

Publisher: (not shown)

Category: Productivity

Locales: 2

Latest Version: 4.0.2

Size: 526.8 MB

First Released: Dec 19, 2023

Uncompromising Privacy, Desktop-Class Performance

Harness the power of local LLMs on your Apple devices. No internet, no tracking, and no data leaks: just private intelligence optimized for Apple Silicon.

Zero-Cloud Data Sovereignty

Everything stays on-device. Process confidential legal briefs, proprietary code, or personal records with the absolute certainty that your data is never used for training or stored on a remote server.

Metal 3 Native Optimization

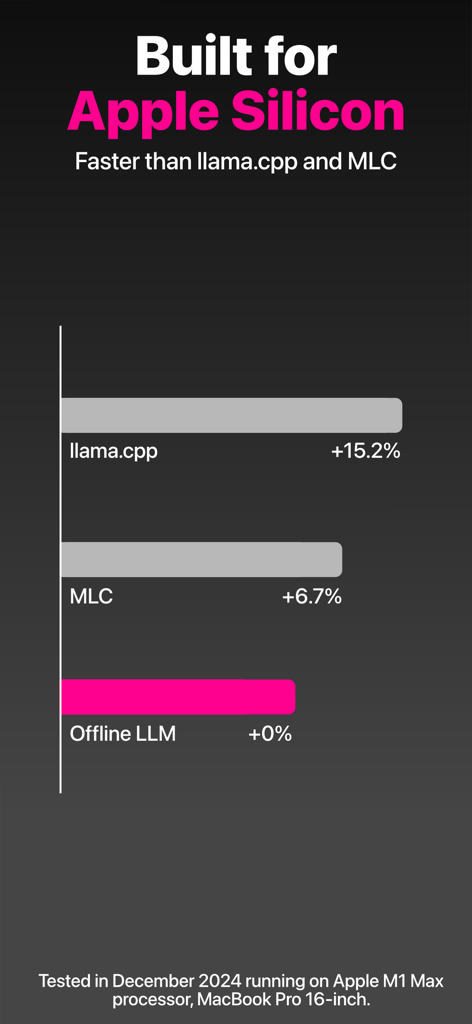

Engineered for maximum throughput on M-series chips. Outperforms standard implementations by leveraging a custom execution engine designed for fluid, real-time responses on iPhone, iPad, and Mac.

More Like This

Top-ranked apps in the same category

ChatGPT

OpenAI OpCo, LLC

Google Gemini

Google LLC

Grok

X.AI Corporation

Gmail - Email by Google

Google LLC

千问 - 阿里最强大模型官方AI助手

Shanghai Zhixin Puhui Technology Co., Ltd.

Google Drive

Google LLC

Microsoft Authenticator

Microsoft Corporation

Google Sheets

Google LLC

Google Docs

Google LLC
