This page is not an official page of the app or its developer, but an independent editorial publication created for informational and commentary purposes. Unless expressly stated otherwise, neither the app nor its developer is affiliated with, endorsed by, sponsored by, authorized by, or otherwise officially connected with MWM, Apple, Google Play, the app publisher, or the app's developer, and nothing on this page implies that the app was developed using MWM's services. Any trademarks, logos, screenshots, and other content remain the property of their respective owners.

ai.local

Use your iPhone 15 Pro or 16 to run advanced language models 100% offline. You get an Ollama-style API, total data sovereignty, and AI with no network round trips: no subscriptions, no tracking, just local intelligence.

Downloads: 2K+

User Rating: (none listed)

Total Ratings: 0

Publisher: (none listed)

Category: Developer Tools

Locales: 1

Latest Version: 1.0

Size: 27.2 MB

First Released: Mar 17, 2025

Pro-Grade AI, Built for Privacy

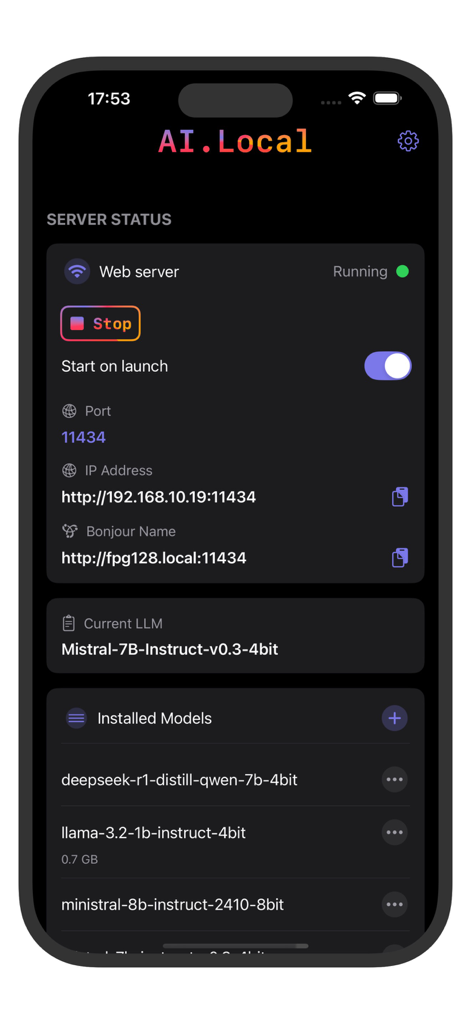

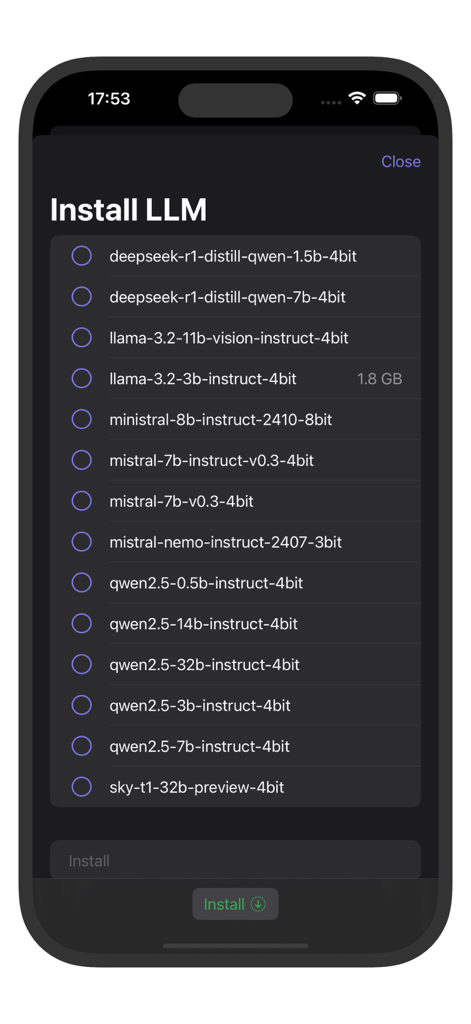

Transform your iPhone into a powerful local LLM server. Experience lightning-fast inference without the cloud, subscriptions, or data tracking.

Absolute Data Sovereignty

Run advanced models locally on your NPU. Your prompts and data never leave your device, ensuring total privacy for sensitive work.

Ollama-Compatible API

Built for developers. Connect your favorite mobile UIs and automation scripts to the app through its Ollama-compatible local API.
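As a rough illustration of what an automation script might look like, here is a minimal Python sketch. It assumes the app mirrors Ollama's `/api/generate` endpoint and its JSON fields (`model`, `prompt`, `stream`, and a `response` field in the reply); the listing does not confirm which endpoints are actually supported. The address in `BASE_URL`, the port, and the model name `example-model` are placeholders, not values taken from the app.

```python
import json
import urllib.request

# Assumption: placeholder address for the phone on the local network.
# 11434 is Ollama's default port; the app may listen on a different one.
BASE_URL = "http://192.168.1.50:11434"


def build_generate_request(prompt, model, base_url=BASE_URL):
    """Build a POST request shaped like Ollama's /api/generate call."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        url=f"{base_url}/api/generate",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt, model, base_url=BASE_URL, timeout=60):
    """Send the request and return the text in the reply's 'response' field."""
    request = build_generate_request(prompt, model, base_url)
    with urllib.request.urlopen(request, timeout=timeout) as reply:
        body = json.loads(reply.read().decode("utf-8"))
    return body["response"]
```

With the app running and reachable, a script could call `generate("Summarize this note.", model="example-model")`, substituting whatever model name and address the app reports. Setting `stream` to `False` asks for a single JSON reply rather than a stream of chunks, which keeps the parsing simple.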

More Like This

Top-ranked apps in the same category

TestFlight

Apple Inc.

Replit: Vibe Code Apps

Replit, Inc

GitHub

GitHub

O-KAM Pro

Shenzhen VeePai IoT Intelligent Technology LLC

Apple Developer

Apple Inc.

Expo Go

650 Industries, Inc.

Scriptable

Simon Stovring

App Store Connect

Apple Inc.

Sekai: Create · Remix · Play

Versa AI, Inc.
